Our eSystem exam management software has been focused on improving efficiency and streamlining assessment processes since it was launched. Over the years, we’ve supported many institutions in enhancing their exams, gaining deeper insights and delivering higher-quality assessments.

Through close collaboration with our customers and sustained investment in development, our software continually evolves to meet the demands of modern assessment.

As AI advances rapidly and becomes part of day-to-day working practices, it is redefining expectations around efficiency and performance. We recognised a clear opportunity to bring the same benefits to assessment, which led to the development of our AI module.

In our earlier blog, AI in Assessment: How technology is shaping better exams, we explored various ways that AI could be harnessed to improve exam processes. With our AI module, we wanted to focus on an area where AI could deliver real value and help with one of the biggest pain points our customers face: marking written exam questions.

Addressing the challenge of marking written exams at scale

Whilst it has been possible to automate marking for multiple-choice and very short answer style questions for many years, the same has not been true for written questions, where students need to give more detailed answers. These questions require an examiner to read every candidate’s answer, apply their academic judgement and decide on an appropriate mark against a model answer, all while maintaining consistency across candidates.

It’s time-consuming and labour-intensive. Add candidates who increasingly expect detailed feedback and fast results, and it’s no wonder that organisations feel under pressure and are looking for solutions to help. For some, providing detailed feedback, or any feedback at all, is an insurmountable task: they simply do not have the time or the resources to do it at scale.

Our AI marking and feedback features directly address these challenges, delivering real value for both academic departments and candidates. They allow assessment teams to mark more efficiently and to deliver timely, detailed feedback at scale. Candidates, in turn, gain greater insight into their performance than before, which enhances their overall learning experience.

Available as an add-on to an existing eSystem subscription, the AI module fully integrates with the platform. It has two main ‘modes’: AI-assisted marking, where AI acts as a digital co-marker, and AI-automated marking evaluation, a tool that allows organisations to test and quality assure AI models.

AI-Assisted Marking

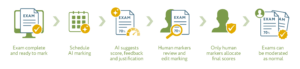

AI-assisted marking is a core component of our AI marking and feedback approach. In this mode, AI assists human markers. Organisations first enable AI for use in the marking process and then add AI as a marker, in the same way they would add a human marker. AI marking is then scheduled, and once it is complete, the exam is ready for human marking. When a human marker begins marking, they are presented with the AI suggestions for each answer: a suggested score, feedback, a justification and a confidence level. The marker decides whether to accept, amend or reject the suggested score and feedback. This ensures that every mark and piece of feedback is owned by a human marker and that academic judgement and integrity remain central to the process. AI scores are never allocated to candidates directly; a human must review each answer and enter the final mark.

AI-Assisted Marking and Feedback Process Explained:

Using AI marking and feedback tools to support examiners significantly speeds up the marking process, promotes consistency and allows quality feedback to be delivered at scale.

It’s important to note that, as with traditional marking, a strong model answer is critical. Without it, AI would rely on the question and its own general knowledge, increasing the risk of inaccuracy and inconsistency. A clear, structured model answer ensures AI adheres to set academic standards and provides a definitive reference for marking decisions, keeping assessment consistent, transparent, and aligned with the intended learning outcomes.

AI-assisted marking can also be applied to Very Short Answer (VSA) questions. Here, AI evaluates all candidate responses and provides a score and a certainty value for each candidate answer. Human markers then confirm or adjust these assessments as needed, ensuring accurate, consistent scoring while significantly reducing the time and effort involved.

AI-Automated Marking Evaluation

In this mode, organisations can automatically mark test exams and test data. Intended as a testing and evaluation tool, and not for use in live exams, this mode allows institutions to test how AI models perform against other AI models and against human markers in a controlled environment.

The aim is to build confidence in the AI models used to assist marking in live exams. Assessment teams can make sure that the models they use are the ones that perform best and most closely align with their academic objectives. It also provides a robust and defensible process for validating AI performance, supporting internal quality assurance and enabling institutions to evidence, where needed, the reliability of their chosen approach to AI-assisted marking.

Advancing AI-driven efficiency across assessment processes

As assessment continues to evolve, the role of AI in marking and feedback is set to become an increasingly important part of how institutions manage scale, consistency, and quality. Rather than replacing academic judgement, the focus is on supporting it – providing tools that help markers work more efficiently while maintaining full control over final decisions.

Looking ahead, further AI capabilities will continue to be introduced, with a clear focus on driving efficiency and improving processes – principles that have always been central to the eSystem.

Get in touch

If you would like to find out more about our eSystem, the AI marking and feedback features, or how we can help you streamline and improve your exam processes, contact us: call our sales team on +44 (0)1223 851703 or email info@speedwellsoftware.com.